"The best way to predict the future is to invent it." – Alan Kay

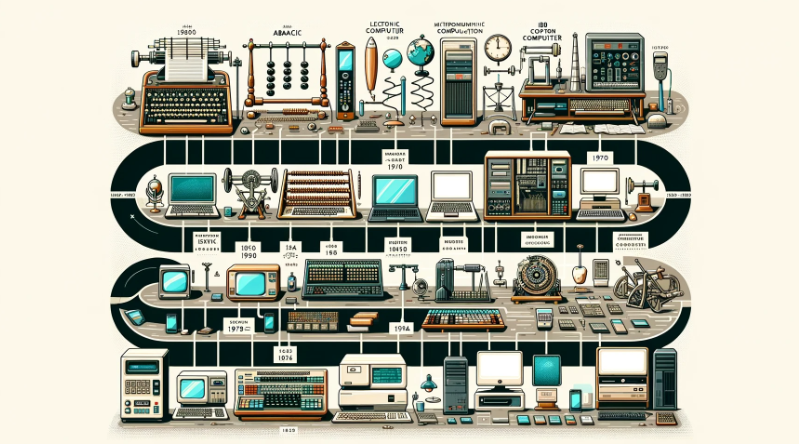

From the abacus to quantum computing, the evolution of computers is nothing short of revolutionary. The devices we now carry in our pockets—capable of processing billions of instructions per second—trace their roots to mechanical calculators, punched cards, and rooms filled with vacuum tubes. Understanding the history of computers isn’t just a trip down memory lane; it’s the foundation of modern innovation.

Consider this: ENIAC, the first general-purpose electronic computer, weighed over 27 tons and performed about 5,000 additions per second. A modern smartphone, executing billions of instructions per second, is roughly a million times faster. The leap from Charles Babbage’s Analytical Engine to the latest AI-driven machines is a story of ingenuity, ambition, and relentless problem-solving.

But computers weren’t just built for convenience. They were born out of necessity: wartime codebreaking, complex calculations, and the automation of human tasks. Whether it was Ada Lovelace pioneering programming, Alan Turing envisioning intelligent machines, or IBM transforming business computing, every milestone in computing history paved the way for today’s digital landscape.

In this article, we’ll dive into the defining moments, from the Antikythera Mechanism to the rise of the Internet, from the microprocessor boom to the dawn of artificial intelligence. The history of computing isn’t just about machines; it’s about people—visionaries who saw beyond their time and dared to push the limits of what was possible.

Let’s explore how computers evolved, shaped industries, and redefined the way we live, work, and think.

What is a Computer?

Historically, the term "computer" referred to a human who performed numerical calculations, often using mechanical calculating devices. With the advent of electronic machines, the term shifted to describe automated computing devices.

In modern usage, a computer can refer to various devices, from traditional desktop PCs to laptops, smartphones, tablets, and even embedded systems in appliances and machinery. Despite differences in form factor and purpose, all computers share the same fundamental functions.

A computer is an electronic machine that:

- Collects information (input).

- Stores data and processes it according to programmed instructions.

- Outputs results in a meaningful way.

At its core, a computer executes arithmetic and logical operations using a set of programmed instructions, making it a powerful tool for solving complex problems, automating tasks, and enhancing productivity.

Primitive Computing and Ancient Civilizations

The history of computing is deeply rooted in human civilization. Long before modern computers, early societies developed tools to simplify calculations. From counting with sticks and stones to using the abacus, these primitive methods laid the foundation for computing as we know it today.

Sticks and Stones: The Earliest Computing Tools

Before the development of structured counting systems, early humans relied on sticks, bones, and stones to track numbers. This method evolved into tally marks, which were used for trade, taxation, and record-keeping.

The Abacus: A Universal Counting Device

One of the earliest calculating devices, the abacus, is usually traced to ancient Mesopotamia; versions were later used in China, Japan, and Europe. In its familiar form it consists of beads that slide on rods, allowing users to perform basic arithmetic operations. Variants include:

- Chinese Suanpan – Two beads above, five below the divider.

- Japanese Soroban – One bead above, four below.

- Russian Schoty – A simpler design with ten beads per rod.

The abacus remained a primary tool for merchants and scholars for centuries, proving its effectiveness in manual calculations.
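
As a rough illustration of how the bead layout encodes numbers, the sketch below assumes the Soroban convention listed above, with the single upper bead worth five and each of the four lower beads worth one. It shows how many beads on each rod would be moved toward the divider for a given number. The function names are made up for this example.

```python
def soroban_digit(digit):
    """Return (upper_beads, lower_beads) pushed toward the divider for one digit."""
    if not 0 <= digit <= 9:
        raise ValueError("a single rod holds a digit from 0 to 9")
    # The upper bead counts as 5; each lower bead counts as 1.
    upper, lower = divmod(digit, 5)
    return upper, lower


def soroban_number(n):
    """Return the bead positions for each rod, most significant digit first."""
    return [soroban_digit(int(ch)) for ch in str(n)]


print(soroban_number(1946))  # [(0, 1), (1, 4), (0, 4), (1, 1)]
```

Each rod holds one decimal digit, so adding two numbers comes down to moving beads rod by rod and carrying to the next rod when a digit passes nine.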

Ancient Egyptian and Babylonian Mathematics

Two of the most influential ancient civilizations, the Egyptians and Babylonians, developed early mathematical systems that influenced future computing advancements:

- Egyptians – Used a decimal system and developed geometry for architecture, such as pyramid construction.

- Babylonians – Created a base-60 (sexagesimal) system, which we still use for time (60 minutes per hour) and angles (360 degrees in a circle).

Their advancements in algebra, geometry, and fractions laid the groundwork for modern mathematics and computing.
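
To make the base-60 idea concrete, here is a minimal sketch that splits a whole number into sexagesimal digits, the same arithmetic we use when turning a count of seconds into hours, minutes, and seconds. The function name `to_sexagesimal` is an illustrative choice, not a historical term.

```python
def to_sexagesimal(n):
    """Return the base-60 digits of a non-negative integer, most significant first."""
    if n < 0:
        raise ValueError("n must be non-negative")
    digits = []
    while True:
        # Peel off the least significant base-60 digit on each pass.
        n, remainder = divmod(n, 60)
        digits.append(remainder)
        if n == 0:
            break
    return digits[::-1]


# 3,725 seconds is 1 hour, 2 minutes, 5 seconds.
print(to_sexagesimal(3725))  # [1, 2, 5]
```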

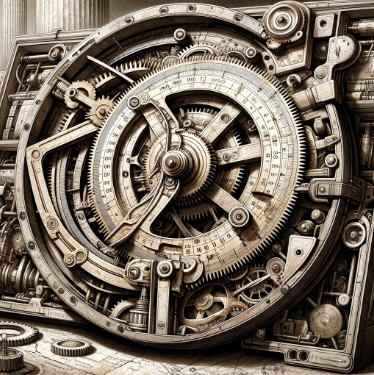

The Antikythera Mechanism: Ancient Greece's "Computer"

Recovered from a shipwreck near the Greek island of Antikythera in 1901, this device, usually dated to the 2nd or 1st century BCE, is often described as the oldest known analog computer.

Featuring over 30 bronze gears, it was used to predict eclipses, track planetary movements, and model astronomical cycles. The complexity of this device showcases the advanced engineering skills of ancient Greek scientists.

These early tools and techniques show that the desire to simplify calculations and understand the world through numbers has been a constant throughout history. They set the stage for more sophisticated computing devices to come.

Mechanical Wonders of the Renaissance and the Enlightenment

The period from the 14th to the 18th century was a time of immense scientific growth and exploration. As scholars sought to refine mathematical and computational methods, mechanical devices emerged to aid in calculations. These inventions paved the way for the future of programmable machines.

Pascal’s Pascaline: The First Mechanical Calculator

In the 1640s, French mathematician Blaise Pascal invented the Pascaline, one of the earliest mechanical calculators. Designed to help his father with tax calculations, the Pascaline could perform addition and subtraction through a system of gears and dials.

While limited in function, the Pascaline was a significant leap in automated computation, showing that machines could be designed to handle mathematical operations.

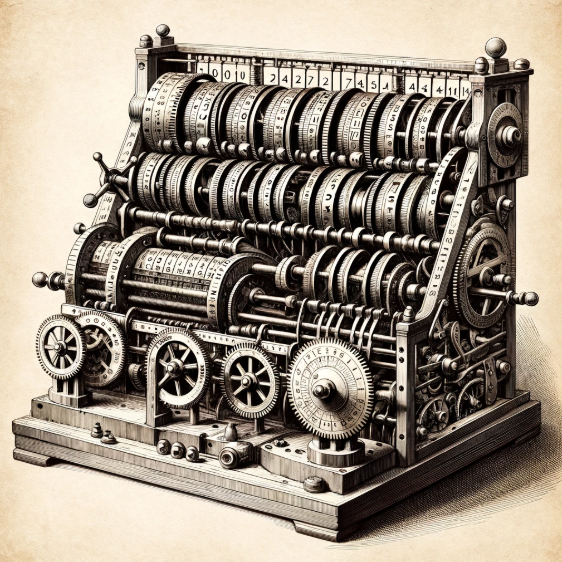

Leibniz’s Stepped Reckoner: Expanding Mechanical Computation

Inspired by Pascal’s work, German polymath Gottfried Wilhelm Leibniz developed the Stepped Reckoner in the 1670s. Unlike the Pascaline, the Stepped Reckoner could perform multiplication and division using a stepped drum mechanism.

Leibniz was also an early advocate of binary numbers, an essential concept in modern computing. His vision of a universal system of computation anticipated today’s digital logic.

Babbage’s Analytical Engine: The First Programmable Computer

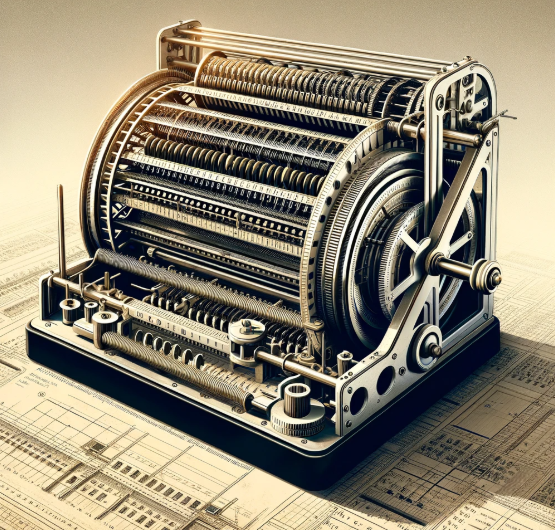

In the 1830s, British mathematician Charles Babbage designed the Analytical Engine, a mechanical device intended to perform a wide range of calculations. Although it was never completed, the design set it apart from its predecessors: it was programmable using punched cards, a method borrowed from the Jacquard loom used in the textile industry.

The Analytical Engine included components resembling modern computers:

- The Store – Functioned like memory for storing numbers.

- The Mill – Performed arithmetic calculations (similar to a CPU).

- Punched Cards – Provided instructions for operation.
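
The division of labor between these three parts can be sketched in a few lines of code. This is a loose modern analogy, not a reconstruction of Babbage's design: the card format `(operation, source_a, source_b, destination)` and the names `run_cards` and `MILL_OPERATIONS` are assumptions made purely for illustration.

```python
import operator

# Hypothetical operations the "Mill" can perform (illustrative only).
MILL_OPERATIONS = {
    "add": operator.add,
    "sub": operator.sub,
    "mul": operator.mul,
}


def run_cards(cards, store):
    """Apply each card in order and return the final contents of the Store."""
    store = list(store)  # work on a copy so the caller's list is unchanged
    for operation, source_a, source_b, destination in cards:
        # The Mill fetches two numbers from the Store, combines them,
        # and writes the result back to the Store.
        result = MILL_OPERATIONS[operation](store[source_a], store[source_b])
        store[destination] = result
    return store


# Compute (3 + 4) and then square it.
cards = [("add", 0, 1, 2), ("mul", 2, 2, 3)]
print(run_cards(cards, [3, 4, 0, 0]))  # [3, 4, 7, 49]
```

The point of the sketch is that changing the cards changes the computation while the Store and the Mill stay the same, which is the essence of a programmable machine.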

Ada Lovelace: The First Computer Programmer

Babbage’s collaborator, Ada Lovelace, is often considered the first computer programmer. She recognized that the Analytical Engine could do more than just crunch numbers: it could manipulate symbols and generate complex sequences.

In her 1843 notes on the engine, she described a method for computing Bernoulli numbers that is widely considered the first algorithm intended for execution by a machine. Her vision foreshadowed the idea of general-purpose computing.

These pioneering inventions proved that machines could process information, setting the foundation for modern computing principles.

The 20th Century: Dawn of the Electronic Age

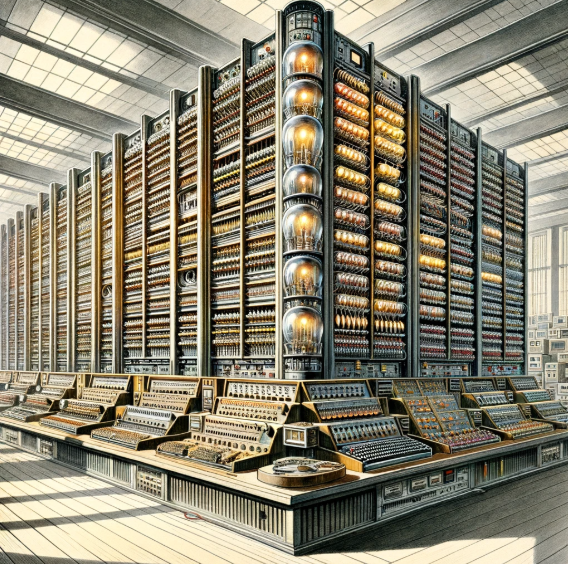

The 20th century marked a major transformation in computing. Computers evolved from mechanical devices to powerful electronic machines, enabling faster and more complex calculations. This period saw the invention of vacuum tube computers, the Turing Machine, and early general-purpose electronic computers like ENIAC.

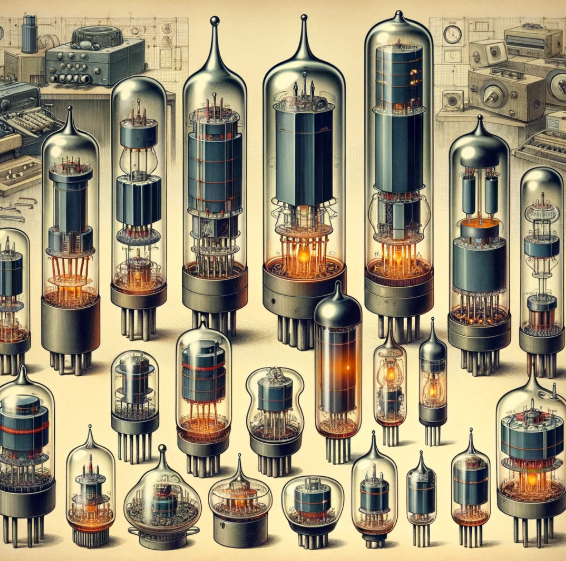

The Development of the Vacuum Tube

In 1904, British scientist John Ambrose Fleming introduced the vacuum tube (thermionic valve). Initially used to detect radio signals, it soon became an essential component in early electronic computers.

Later tube designs, such as the triode introduced by Lee de Forest in 1906, could amplify and switch signals. Used as electronic switches, tubes replaced slower electromechanical relays, which led to faster computation and allowed the development of fully electronic computers.

Alan Turing and the Concept of the Turing Machine

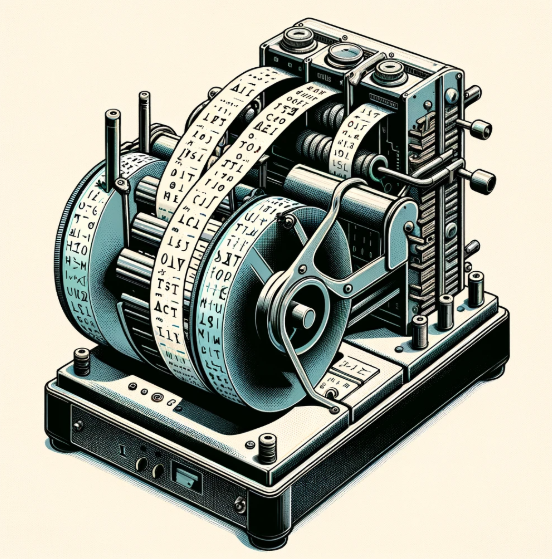

In the 1930s, British mathematician Alan Turing introduced the Turing Machine, a theoretical model that defined the principles of computation.

The Turing Machine could read, write, and modify symbols on an infinite tape based on a set of rules. This concept laid the foundation for modern computing theory: it gave a precise definition of what it means for a problem to be solvable by an algorithm, and Turing used it to show that some problems cannot be solved by any algorithm at all.
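
The read, write, and move cycle is easier to see in code. The following is a minimal simulator sketch, not Turing's original formulation: the rule table, the state names (`start`, `carry`, `halt`), and the step limit are all assumptions chosen for this example, and a dictionary stands in for the infinite tape. The sample rules add one to a binary number.

```python
def run_turing_machine(rules, tape, state="start", blank="_", max_steps=1000):
    """Run a rule table on a tape and return the final tape contents as a string."""
    cells = dict(enumerate(tape))  # position -> symbol; unset cells are blank
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        symbol = cells.get(head, blank)
        # Each rule says: what to write, which way to move, and the next state.
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    return "".join(cells[i] for i in sorted(cells)).strip(blank)


# Hypothetical rule table: scan right to the end, then add 1 with carry.
INCREMENT_RULES = {
    ("start", "0"): ("0", "R", "start"),
    ("start", "1"): ("1", "R", "start"),
    ("start", "_"): ("_", "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),
    ("carry", "0"): ("1", "L", "halt"),
    ("carry", "_"): ("1", "L", "halt"),
}

print(run_turing_machine(INCREMENT_RULES, "1011"))  # 1100
```

The step limit is a practical safeguard for the sketch only; a theoretical Turing Machine has no such limit and may run forever.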

During World War II, Turing worked at Bletchley Park, where he played a crucial role in breaking the German Enigma cipher. He helped design the Bombe, an electromechanical machine that automated the search for Enigma settings.

ENIAC: The First General-Purpose Electronic Computer

Completed in 1945 and publicly unveiled in 1946, the Electronic Numerical Integrator and Computer (ENIAC) is widely regarded as the world’s first general-purpose, fully electronic digital computer. Developed by John Mauchly and J. Presper Eckert at the University of Pennsylvania, ENIAC was built to assist in ballistic calculations for the U.S. Army.

ENIAC’s key features:

- Used 17,468 vacuum tubes to process calculations.

- Weighed over 27 tons and occupied an entire room.

- Performed about 5,000 additions per second, far faster than earlier electromechanical machines.

Despite its massive size, ENIAC demonstrated that electronic computers were significantly faster than mechanical ones. It paved the way for modern computing and inspired future developments in digital processing.

The Mainframe Era and the Birth of UNIVAC

Following ENIAC, Eckert and Mauchly’s company, by then part of Remington Rand, delivered the UNIVersal Automatic Computer (UNIVAC I) in 1951. It was the first commercially produced computer in the United States.

UNIVAC gained widespread attention when it correctly predicted the 1952 U.S. presidential election results, showcasing the power of computers in data analysis.

The success of UNIVAC led to the rise of mainframe computers, which were large, powerful machines used by corporations, government agencies, and research institutions. Companies like IBM dominated this era, producing mainframes that processed vast amounts of data for business and scientific applications.

The dawn of the electronic age revolutionized computing, leading to the development of smaller, faster, and more efficient computers that shaped the digital world we live in today.

Innovations in Personal Computing

The latter half of the 20th century saw a dramatic shift in computing. From large, room-filling mainframes, we transitioned to personal computers (PCs)—compact, affordable machines that individuals and businesses could use daily. This revolution was driven by advancements in microprocessors, operating systems, and visionary tech companies.

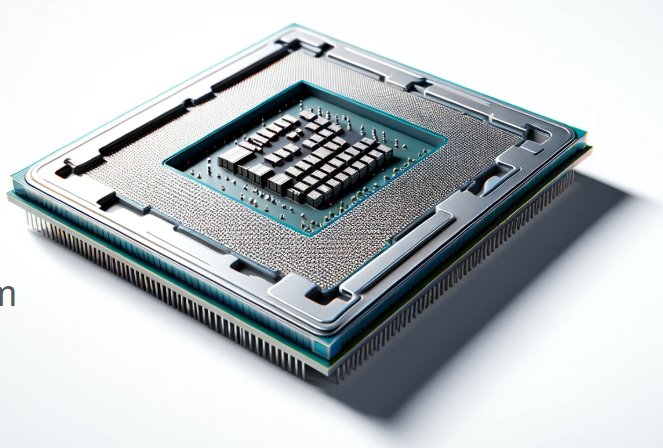

The Birth of Microprocessors

In 1971, Intel introduced the 4004 microprocessor, the first commercially available single-chip CPU. This breakthrough allowed an entire processing unit to be placed on a single silicon chip, reducing computer size and cost.

Following this, Intel released the 8080 in 1974 and the 8086 in 1978. The 8086 introduced the x86 architecture that most PCs still build on. The ability to integrate multiple computing functions into a small chip made computers more accessible to individuals and businesses.

The Rise of Apple, Microsoft, and IBM Personal Computers

The personal computing revolution was spearheaded by three major players: Apple, Microsoft, and IBM.

- Apple: Founded by Steve Jobs and Steve Wozniak in 1976, Apple introduced the Apple I that year and the Apple II in 1977, which became one of the first mass-produced personal computers.

- IBM: In 1981, IBM released the IBM Personal Computer (IBM PC), which standardized PC hardware and led to a boom in the personal computing market.

- Microsoft: Co-founded by Bill Gates and Paul Allen in 1975, Microsoft supplied the MS-DOS operating system for the IBM PC, adapting it from an existing system it had acquired, 86-DOS. MS-DOS became the foundation for many PCs.

These companies shaped the modern computing industry, creating systems that defined how people interacted with technology.

The Evolution of Operating Systems

As personal computers became more powerful, operating systems (OS) evolved to improve usability. The introduction of graphical user interfaces (GUIs) transformed how users interacted with computers.

- MS-DOS (1981): A command-line operating system that laid the foundation for Microsoft Windows.

- Apple Macintosh (1984): One of the first commercially successful personal computers with a GUI, making computers more user-friendly.

- Windows (1985): Microsoft introduced Windows as a GUI layer for MS-DOS, eventually becoming the dominant OS for PCs.

These innovations in operating systems made computing more accessible to the general public, leading to the explosion of personal computer usage in homes and businesses worldwide.

The rise of personal computing changed how people work, communicate, and innovate. With the power of microprocessors, operating systems, and software, computers transitioned from niche tools for experts to indispensable devices for everyone.

The Age of Connectivity: Internet and the World Wide Web

As personal computers became widespread, another technological breakthrough transformed the world: the internet. The ability to connect computers across vast distances revolutionized communication, commerce, and information sharing.

The Birth of the Internet

The origins of the internet trace back to the Advanced Research Projects Agency Network (ARPANET), a project initiated by the U.S. Department of Defense in the 1960s. ARPANET aimed to create a decentralized communication network that could withstand disruptions.

Key milestones in internet development:

- 1969: The first successful ARPANET message was sent from UCLA to the Stanford Research Institute (SRI).

- 1973: ARPANET expanded internationally, connecting to networks in the United Kingdom and Norway.

- 1983: ARPANET adopted the TCP/IP protocol suite, which standardized how computers communicate over networks and forms the foundation of today’s internet.

The World Wide Web and Tim Berners-Lee

While the internet allowed computers to communicate, it was the World Wide Web (WWW) that made information easily accessible. In 1989, British computer scientist Tim Berners-Lee, then working at CERN, proposed a system for sharing documents connected by hyperlinks.

By 1991, Berners-Lee had developed the first web browser and web server, making it possible to browse and publish content online. This breakthrough paved the way for the modern internet experience.

The Rise of Search Engines and E-Commerce

As the web grew, the need to organize and search for information became critical. This led to the rise of web directories and search engines, followed by social platforms that changed how content was shared.

- 1994: Yahoo! was founded as one of the first web directories.

- 1998: Google launched, revolutionizing search with its PageRank algorithm.

- 2004-2005: Facebook and YouTube emerged, shaping the era of social networking and video content.

Alongside search engines, the internet also enabled e-commerce, allowing businesses to sell products online. Companies like Amazon (1994) and eBay (1995) pioneered online shopping, transforming global commerce.

The internet and World Wide Web created an interconnected world, giving rise to digital businesses, social media, and a global information economy. Today, everything from education to entertainment is shaped by this technology.

The Ongoing Evolution of Computers

From ancient counting tools to advanced artificial intelligence, the history of computers is a story of continuous innovation. Each era has built upon the discoveries of the past, transforming computing into an essential part of modern life.

The journey has taken us from the abacus to the microprocessor, from the first mainframes to today’s AI-powered devices. Computing has enabled breakthroughs in science, medicine, communication, and countless other fields.

As we move forward, the next frontiers of technology, including quantum computing, artificial general intelligence (AGI), and human-machine integration, promise even greater advancements. The computers of tomorrow may do more than process information; they may learn, predict, and adapt in ways we are only beginning to understand.

The story of computing is far from over. As technology advances, we remain on the brink of new discoveries that will shape the future for generations to come.

